The Firm That Forgot How to Think: The Competitive Risk Your Board Isn’t Discussing

Remember when we worried that Google would make us shallow thinkers?

Our brain capacity is finite, so augmentation and amplification are inevitable. But augmentation should work the way we add another floor to an existing building: it requires a strong foundation to safely carry the additional load. This is not the same as using a calculator for arithmetic we could do in our heads. The distinction is whether you’re extending capability, or atrophying it by replacement.

The technology itself is neutral. The distinction lies entirely in intent: are we using AI to bypass the hard work of learning, or to extend and accelerate our capacity to be curious, insightful, and skilled?

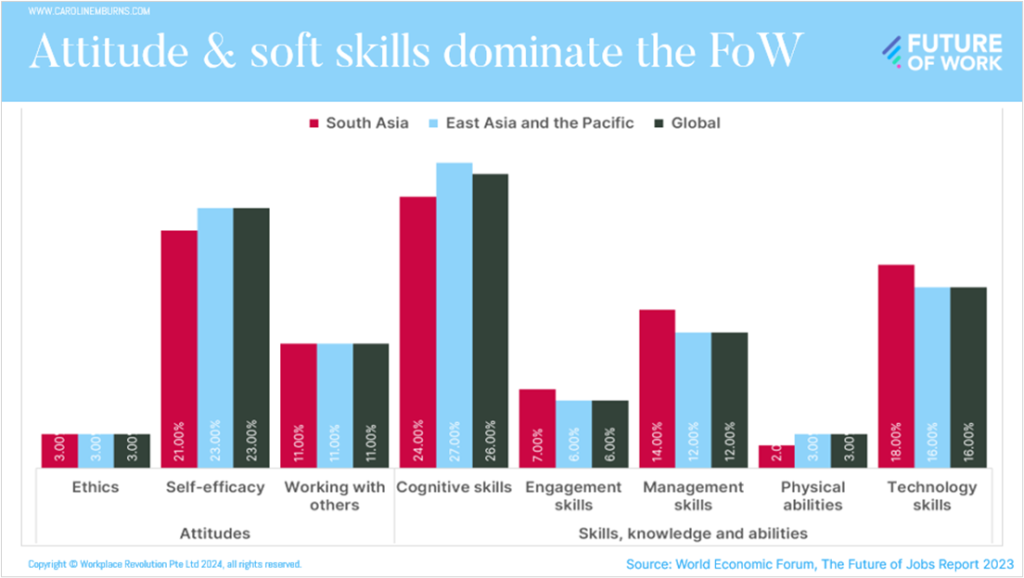

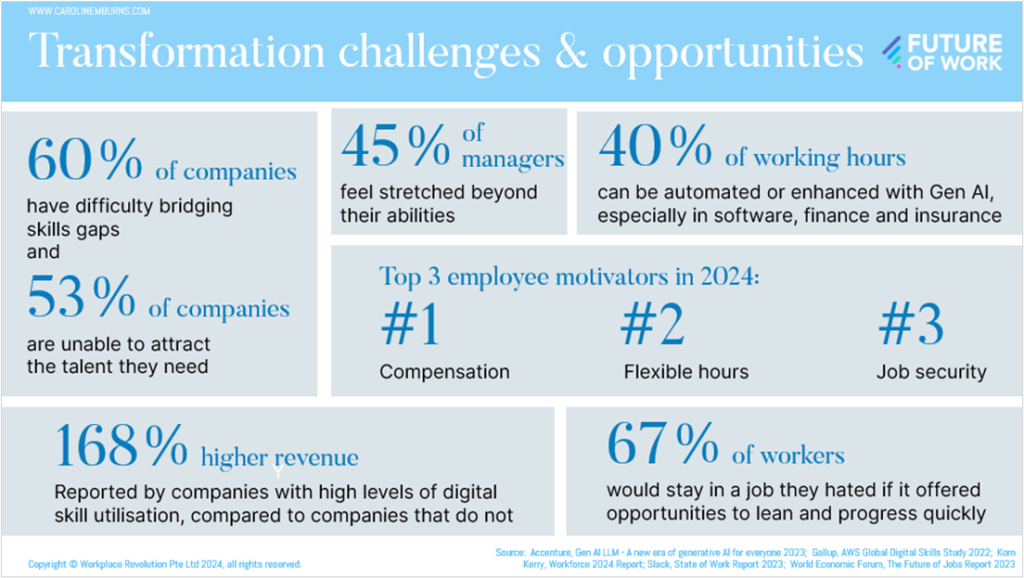

All organisations rely to some degree on human capabilities – intellectual, emotional and physical. That reliance is amplified in professional services and creative businesses such as management consultancies, law firms, accounting and engineering practices, and advertising agencies. Since employee-related costs typically account for at least two-thirds of operating costs for a professional services firm (PSF), claims that AI agents can accelerate project timelines by 40% to 50% and reduce costs by over 40% create a stark choice between competitiveness and quality.

According to Marty Chavez, former CFO, CIO and global co-head of securities at Goldman Sachs, it’s a mistake for professional services firms to say “How am I going to defend business against AI?” – the better question is how they can “reconceive what it means to deliver those services.”

Case in point: McKinsey is rolling out its proprietary AI platform Lilli across 45,000 employees, enabling consultants to do “in minutes what it would have taken them weeks to do.” The efficiency gains are real. But so are the risks.

Last year, Deloitte agreed to refund part of a $440,000 consultancy fee to the Australian government after a report it delivered included AI-generated fabricated academic citations, false references, and a quote wrongly attributed to a Federal Court judgment. Deloitte maintains the substantive findings were unaffected. Clients and courts are increasingly unlikely to accept that distinction.

This article is not a board primer on AI risk and opportunity — there are plenty of those.

It is focused on a slower, quieter risk: that in our urgency not to be left behind, we gradually unravel the very thing that makes our firms worth choosing. Our people. And in doing so, we blur our identity as an organisation — indistinguishable not just from competitors, but from every other AI-assisted business in every other sector.

My aim is to widen the perspectives of the boards, leaders, and business owners I work with — so that AI is not seen merely as something requiring a policy, but as a tool that can amplify your firm’s unique competitive advantage. Getting that right may take years, and its value may only become clear in hindsight. Which is precisely what boards are for.

To do that, I will first recap the key business risks of AI integration in knowledge-based firms — in particular the emerging concern around cognitive outsourcing. Then I will set out the strategic considerations for AI stewardship that genuinely intensifies the value of your people, the work they do, and their engagement with it.

The Fragility of Expertise in the Age of AI

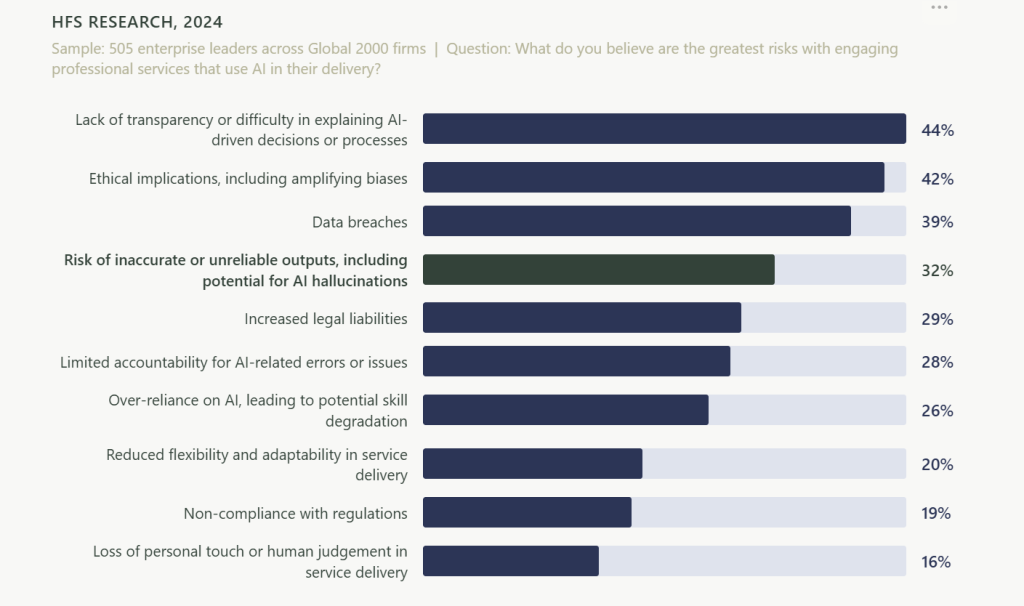

For firms that rely on knowledge workers, AI integration presents four critical risks, ranging from professional malpractice and reputational damage to the long-term erosion of expertise and the breakdown of traditional talent development.

1. Inaccurate Deliverables and Professional Malpractice

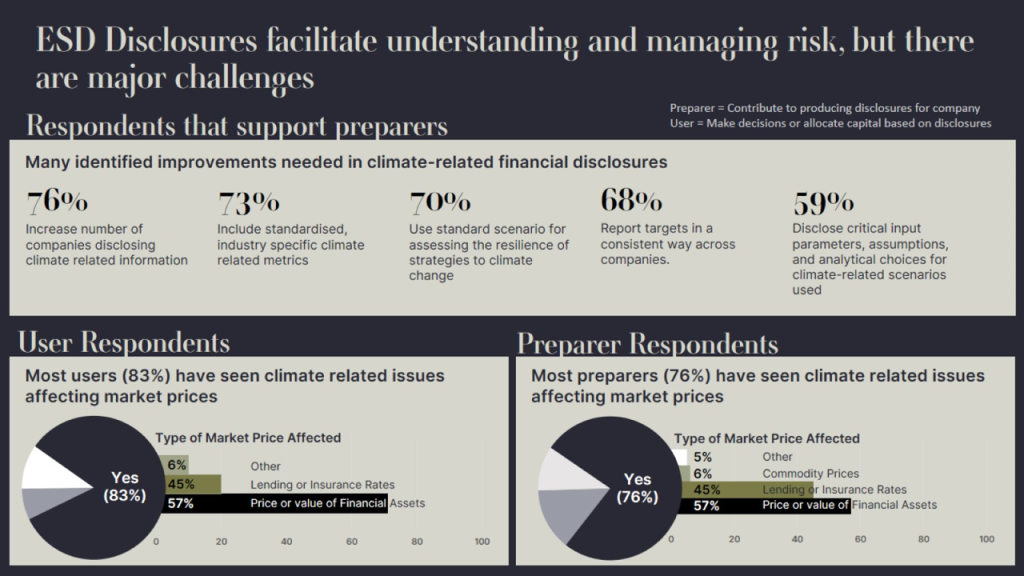

Approximately 60% of firms recently surveyed have no AI governance plan, leaving them vulnerable as courts and governments begin introducing binding standards and AI-usage clauses in contracts. The Deloitte case, in which a A$440,000 fee (roughly US$290,000) was partly refunded, is a lesson in what happens when professional judgment is replaced by blind trust in AI, and it is not an isolated warning. Failure to disclose AI use can lead to fee refunds, court sanctions, and fines.

There is also a subtler risk: employees may not have the expertise you believe they do. Research shows that 90% of job seekers who used AI to craft their resumes said they felt confident applying for roles they weren’t qualified for. Once hired, their work may be faster, but it is surface-level. Where nuance, originality, or judgment is required, the capability is not there.

2. Erosion of Trust and Authenticity

Clients pay premium rates for human insight and a unique perspective. When individuals replace professional judgment with uncritical trust in AI, that is cognitive outsourcing, and it risks professional malpractice. It is not, however, the same as intellectual misrepresentation. If I ask AI to produce a report and I sign my name to it as though it were wholly my own reasoning, that is misrepresentation.

Over-reliance on AI in client communication can also make professional relationships feel impersonal and transactional. Clients may eventually value a firm less if they perceive the advice they receive as formulaic or soulless.

The blurred motivations within AI also present risk. Stuart Russell, professor of computer science at UC Berkeley, and co-founder of the International Association for Safe and Ethical AI, warned in his TIME100 AI speech that we have no idea whether training large language models to imitate humans results in the AI absorbing human-like motivations – such as self-preservation and self-empowerment – and pursuing those goals independently.

3. Erosion of Focus, Professional Judgment and Complex Reasoning

Judgment and finesse are developed by thinking through choices, debating alternatives, and observing outcomes. Relying on AI for ideas, analysis, and decisions sacrifices the learning gained from that process – leaving professionals unable to explain or defend choices they did not actually make.

Unfortunately, there is growing evidence that consumption of AI-derived content is contributing to a decline in reading comprehension and sustained focus. Even at elite US colleges, many students now arrive unprepared to read a complete book, interpret a poem, or follow a complex argument.

Writing, too, is a form of thinking. Outsourcing it prevents people from learning to express themselves – or discovering what they want to say – because they lose the ability to distinguish their authentic voice from the formulaic.

All of this compounds into cognitive atrophy.

4. Loss of Tacit Knowledge

AI is highly effective at automating the codified, checkable tasks (the “bottom rung”) traditionally performed by entry-level workers, reducing demand for early-career workers in AI-exposed fields. But AI does not just remove tasks.

“AI is steadily eating away at the training ground that entry-level work used to provide….[The risk is] building a generation of workers who are credentialed but unseasoned, creative but untested.” (Adam Monago, The Missing Rung: AI and the Vanishing Entry-Level Job, 22 September 2025).

Past generations learned judgment by doing: rewriting a draft for the third time, reconciling a messy spreadsheet, shadowing a mentor through a difficult client meeting. If young workers are not tasked with these foundational activities, they may never develop the tacit knowledge they need to become the next generation of experts: the unwritten rules, cultural context, and complex judgment that cannot be taught in a classroom or generated by a prompt.

By 2030, many professions may face a shortage of senior leaders capable of operating below the AI abstraction layer – a generation of “architects who have never laid a brick.”

Practical Strategies for Firms: Protecting and Amplifying the Value of Your Expertise

To mitigate the risk of AI integration eroding competitive differentiation, innovation capacity, and sustainable talent regeneration, we must shift the internal conversation in the organisations we govern from “producing the same or more with less” to “producing superior output with the talent we have.”

Here are three ways you can protect your firm against declining standards of value creation, and increased reputational and financial risk.

1. Governance Controls

- Mandatory disclosure: Boards should enforce a “default to transparency” mindset — discovery of hidden AI use through audits or leaks can permanently destroy client trust. Make AI disclosure mandatory in all client contracts, specifying which tools are used, and require attestations of human review for high-stakes deliverables.

- Separate creation and review: Establish a procedural or physical separation between the individual prompting the AI and the person validating the output. Fresh eyes are essential for catching AI hallucinations that the original drafter may overlook due to anchoring bias.

- Traceability standards: Every claim or citation in a report must be traceable to human-verifiable sources – to prevent the submission of fabricated evidence or invented court references.

- Consistent methods: Consider establishing a reusable library of blueprints and standardised criteria to avoid “uncontrolled agent sprawl” and support internal audit trails.

- Escalation and crisis protocols: Boards must define clear escalation protocols for AI failures: who investigates, who notifies the client, and how to remediate it.

2. Performance and Motivational Alignment

- Shift from headcount to outcome metrics: Boards must expand success measures beyond cost savings to include competitive advantage and quality of output – ensuring firms do not automate away their transactional work at the expense of long-term expertise. Reward employees for skill and judgment, not just speed.

- Redefine performance management: Include metrics for AI leadership and supervision – for example, how effectively an employee tracks, challenges, and corrects AI-generated work.

- Ethics and literacy mandates: Mandatory AI literacy training should ensure staff can recognise the “illusion of thinking” in complex reasoning models and understand model-specific limitations.

- Bias recognition and reflection: Professional services firms are already using generative AI evaluation tools that help managers recognise their own biases and synthesise feedback more accurately. Reflection prompts after complex decisions – “What was your most difficult moment?” or “Where were you uncertain?” – can build intuition and risk tolerance over time.

3. Safeguarding Tacit Knowledge

I consider this the most critical – and most overlooked – strategic response to AI integration at board level. The savings from automating tasks and processes can be material and measurable. The cost of eroding your firm’s knowledge and hands-on learning is delayed, but potentially catastrophic.

To mitigate the risk of the “bottom rung” of the career ladder breaking in your firm, work with HR on strategies that actively build tacit knowledge – especially for early-career employees – to ensure that the judgment, cultural context, and unwritten rules required for senior roles are sustained over time.

- Treat knowledge continuity as a risk management issue: At board and leadership level we are focused on risk mitigation, including reputational, safety, privacy and business continuity risk. Knowledge continuity deserves the same approach. Structured offboarding, mentorship programs designed to transfer expertise rather than just tasks, and graduate apprenticeship models can extend and preserve institutional knowledge before it disappears.

Start by asking team leaders and managers to identify “high-value moments” of knowledge transfer in their work – project retrospectives, complex handoffs, problem-solving discussions. These interactions expose unrecorded workarounds, expert intuition, and the hidden rationale behind complex decisions to less experienced team members.

- Redesign entry-level roles around AI supervision, not just AI use: Before redesigning roles around automated tasks, map the cognitive skills those tasks previously built in early-career employees. Ask what structured replacement will build the same judgment.

As firms rely increasingly on AI for basic tasks, new entry-level programs should not be primarily about producing output – they should be about evaluating it. Structured, AI-augmented apprenticeships, where new starters oversee, test, and correct AI output and manage escalations, train early-career workers on real consequences and complex downstream problems. This increases their value as a necessary complement to automation, and builds the experience base required for more senior roles in future.

- Formalise and structure mentorship – don’t just assign a mentor: According to Gallup, employees with formal mentors are 75% more likely to strongly agree their organisation provides a clear plan for their career development, compared to those with informal mentors. Unstructured mentorship risks missing the why, as employees are mostly exposed only to the what of the situations and conversations around them. Every senior professional should be expected to mentor junior colleagues as a core job responsibility. This requires equipping experienced professionals with the skills to transfer tacit knowledge, give effective feedback, and create genuine learning opportunities.

- Flip the mentorship model intentionally: Research shows nearly two-thirds (62%) of Gen Z employees are actively helping senior colleagues upskill in AI – continuing the reverse digital mentoring that first emerged between millennials and their managers in the early 2000s. Both people benefit: 72% of Gen Z respondents said their AI skills have improved team productivity, while 57% of senior colleagues reported having more time for strategic work as a result. Importantly, this approach also acknowledges younger employees’ value and gives them a compelling reason to stay engaged.

- Use developmental tasks and smart coaching: Project management tools such as Jira can incorporate smart coaching to suggest stretch tasks, such as recommending that a team leader assign a graduate to stakeholder communications once they have mastered analysis reporting. In coding-related roles, AI-driven exercises can simulate real code changes, requiring trainees to identify logic flaws or insufficient testing.

Conclusion

The firms that will lead in 2035 are not the ones that cut costs fastest in 2025. They are the ones that used this moment to deepen their knowledge infrastructure, strengthen their talent pipeline, and build a culture in which AI amplifies human capability instead of replacing it.

As Tali Sachs has observed, companies risk training a generation of professionals who know how to get answers, but not how to question them.

Imagine the alternative: a pipeline of talent that grows from entry level through to expert, leader, mentor, and role model – building social, judgment-based, and technical skills at every stage of that journey.

That pipeline does not build itself. One well-structured program, consistently applied, can ripple across an entire workforce.

The boards that ask hard questions about knowledge continuity today are the ones whose firms will still have genuine expertise – and genuine competitive advantage – a decade from now.

If this feels worth exploring further, I’d welcome the conversation.

Caroline M Burns

This article was originally published in the April edition of my newsletter The Regenerative Edge.
